The Illusion of Speed: Why AI Is Making Teams Faster—but Not Better

We’re shipping more code than ever, yet delivery performance and quality remain flat. The real bottleneck hasn’t disappeared—it has moved.

Two weeks ago, I built an MVP for StrengthsOS in under 12 days. At the same time, I started rewriting Octolaunch from scratch. That’s not the interesting part.

The interesting part is that this is becoming normal.

What used to feel like exceptional productivity is quickly turning into baseline. Features that once required days of focused work now emerge in hours. Entire systems can be scaffolded in a single sitting. The barrier between idea and implementation has almost disappeared.

And if you look at most engineering teams right now, the signals all point in the same direction: more output, faster cycles, and shorter time to code.

So why does it feel like nothing is actually improving?

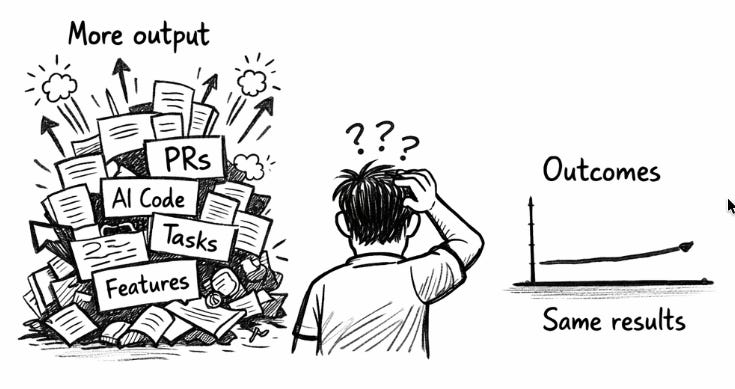

Output is exploding; outcomes are not

Across teams, the pattern is hard to ignore.

Developers are shipping more code than ever before. Pull requests are increasing, backlogs are moving faster, and the visible indicators of productivity are trending up. On dashboards and status reports, it looks like acceleration.

But when you step back and look at the system as a whole, the picture becomes less convincing.

Quality is not improving in a meaningful way. Delivery performance remains largely unchanged. In many cases, rework is quietly increasing. The system absorbs more activity, but it does not translate into better outcomes.

This is not just anecdotal. Early data is starting to surface a contradiction. Some studies show developers feel significantly more productive—reporting perceived speed increases of over 50% when using AI tooling. At the same time, controlled experiments on complex tasks show performance can actually degrade, particularly for experienced engineers. And broader delivery metrics show little to no improvement in throughput, with stability in some cases declining.

At the individual level, everything feels faster.

At the system level, nothing really moves.

That is the paradox.

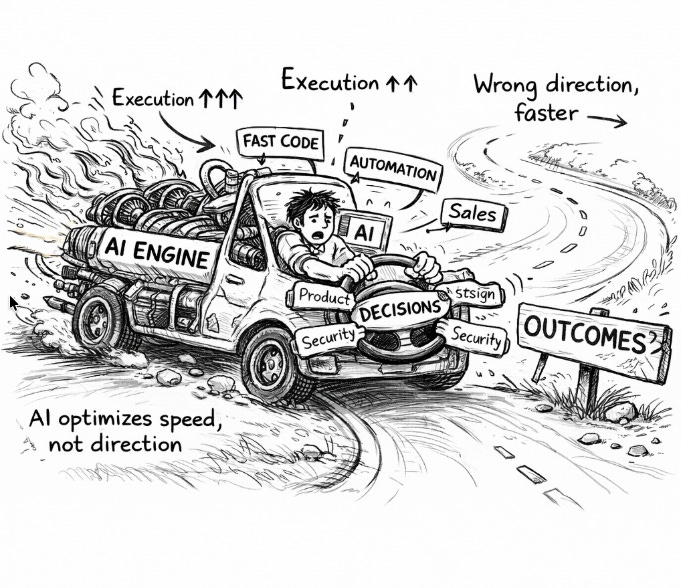

AI optimized execution, not decisions

The reason becomes clearer when you separate two layers that are often conflated: execution and decision-making.

AI has dramatically improved execution. Writing code, generating tests, exploring implementations—these activities are now cheaper, faster, and easier than ever before. The friction that once slowed down development has largely been removed.

But the hardest part of engineering was never execution.

It was deciding what to build, why it matters, and how it fits into a broader system of constraints. It was aligning multiple stakeholders with different incentives. It was designing systems that could evolve without collapsing under their own complexity.

None of that has changed.

Modern engineering is not a linear pipeline; it is a network of interdependent decisions spanning product, design, security, compliance, and operations. Value is created—or destroyed—at the boundaries between these domains. And those boundaries are still governed by coordination, judgment, and trade-offs.

Research consistently shows that as organizations scale, these coordination costs grow faster than the teams themselves. More stakeholders, more dependencies, more alignment overhead. In many environments, the majority of time is already consumed by communication, meetings, and context switching rather than actual building.

AI does not remove this complexity.

It simply makes it easier to execute whatever decision has already been made.

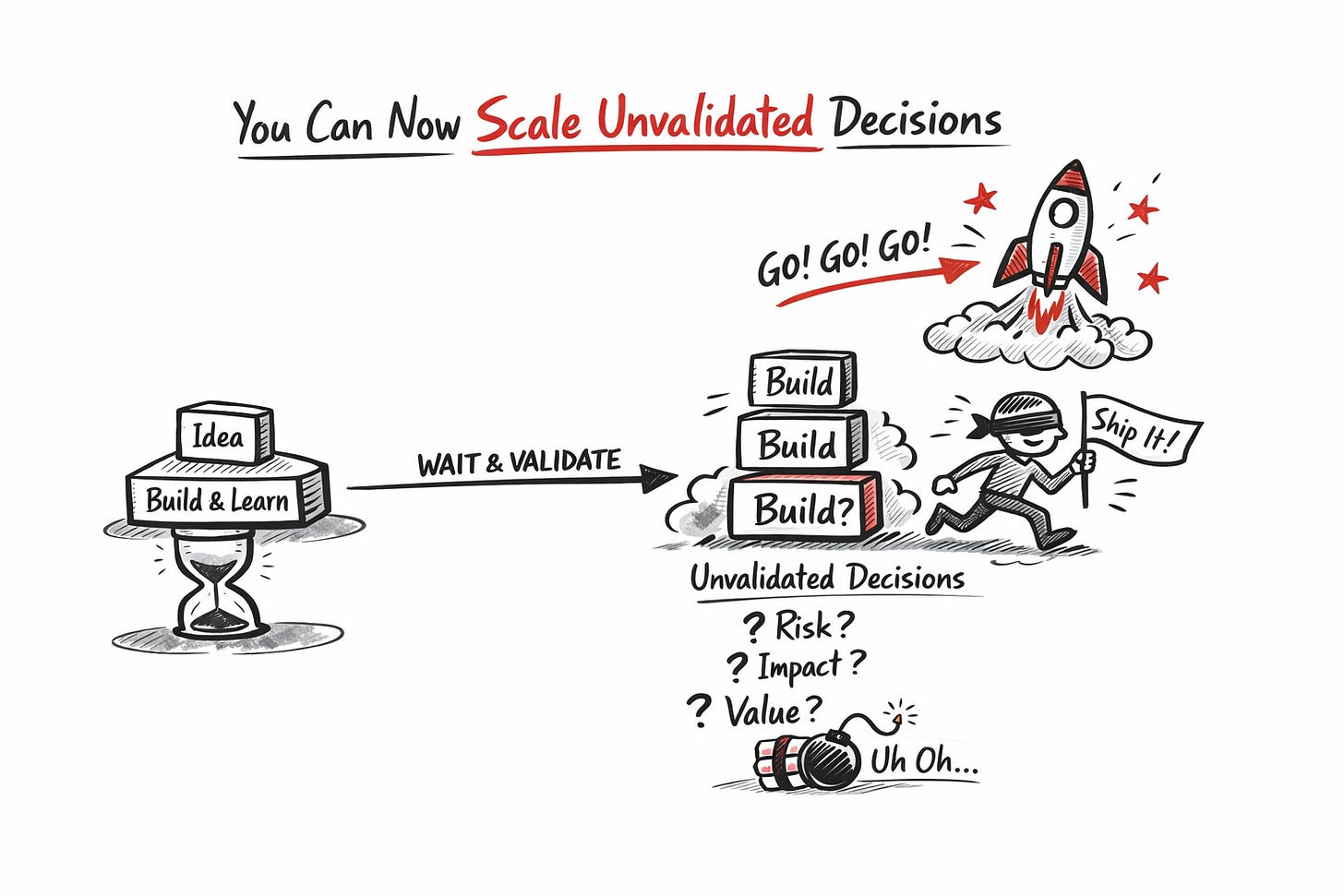

You can now scale bad decisions

This is where the dynamic becomes dangerous.

When execution was expensive, it didn’t just slow teams down—it created space for validation. Building something took time, and that time allowed reality to catch up. Teams could observe how features behaved in the wild, understand trade-offs, and adjust direction before moving forward.

That constraint is now gone. Execution is nearly instantaneous, but validation is not. Customer feedback still takes time. Business impact still takes time. Understanding whether something actually works still takes time.

So we’ve created a mismatch. Decisions are made quickly. They are implemented even faster. But they are validated at the same speed as before.

That means teams are now chaining decisions that haven’t proven themselves yet. A feature is extended before its value is clear. A direction is reinforced before it’s tested. An assumption becomes a roadmap before it becomes evidence.

What used to be a sequence of build → learn → adjust is quietly turning into build → build → build. We are not just scaling bad decisions. We are scaling unvalidated ones. And the more we accelerate execution without closing that validation loop, the weaker the connection becomes between what we build and the value it creates.

More output doesn’t mean more impact. It just means we are moving faster on assumptions we haven’t yet earned the right to trust.

Conclusion: The bottleneck has moved

For years, engineering was constrained by execution. Now it isn’t. We can build faster than ever before. But we cannot validate, align, or decide any faster than the systems around us allow.

And that creates a new kind of bottleneck.

Not in code.

Not in tooling.

But in understanding.

The ability to decide what matters.

The ability to align around it.

The ability to validate it before scaling it.

That is now the limiting factor.

And most teams are still optimizing for the layer that stopped being the constraint.

In the next issue, I’ll break down where the real bottleneck is hiding—and why most organizations are not designed to handle it.

Hello! This article is very insightful.💯

It’s interesting to realize that decision-making isn't necessarily improving. Even with so many software development lifecycle frameworks, the core issue remains the same: ROI.

I think the main problem is a lack of metrics or impact measurement.

There are even teams and product teams who have never conducted a VOC (Voice of the Customer) assessment, or have any develop form of realiable metrics. 🤯

I suspect the industry will remain enamored with AI for a while, and it will take time before teams reach the same conclusions you’ve mentioned here.

Hola Álvaro!

Do you have some real life stories to share that explore this bottleneck shift, and its consequences in a real life project?

Email me and let's get you on the show.