The Real Bottleneck in Engineering: Why AI Didn’t Fix What Slows Teams Down

AI made execution faster, but decision-making, alignment, and validation are still limiting performance. Here’s where engineering teams actually lose time.

For years, we optimized engineering speed.

We invested in better tooling, faster CI/CD pipelines, cleaner architectures, and platform engineering capabilities that reduced friction across the delivery lifecycle. Entire organizations reorganized around improving developer productivity, shortening lead times, and increasing deployment frequency. The assumption was simple: if we could make engineering faster, everything else would follow.

And for a long time, it did.

But something subtle has changed.

Today, teams can build faster than ever before. AI has further reduced the cost of execution, compressing hours of work into minutes and making exploration almost free. Yet despite this acceleration, most organizations are not seeing a proportional improvement in outcomes. Delivery performance is not dramatically better. Quality is not consistently higher. In some cases, stability is even degrading.

That mismatch is not accidental.

It’s a signal that we are still optimizing for a constraint that does not exist.

Engineering is no longer the bottleneck

For most of modern software development, execution was expensive.

Writing production-grade code required time, coordination, and deep expertise. Testing, integrating, and deploying safely introduced additional layers of friction. Improving these areas had a direct and measurable impact on performance, which is why so much focus was placed on optimizing them.

Frameworks like DORA reinforced this approach by showing how capabilities such as deployment frequency, lead time, and change failure rate correlated with high-performing teams. The industry responded accordingly—investing heavily in automation, DevOps practices, and platform engineering to remove bottlenecks in delivery.

Recent observations across teams show that even as execution becomes significantly faster—especially with AI assistance—system-level performance does not improve at the same rate. In fact, some studies suggest a more complex picture: while developers report feeling up to 55% faster when using AI tools, controlled experiments on complex tasks show that experienced engineers can actually become slower, as they spend additional time validating, correcting, and integrating AI-generated outputs. At the same time, broader delivery metrics show little to no improvement in throughput and, in some cases, a decline in stability.

This contradiction points to a deeper reality:

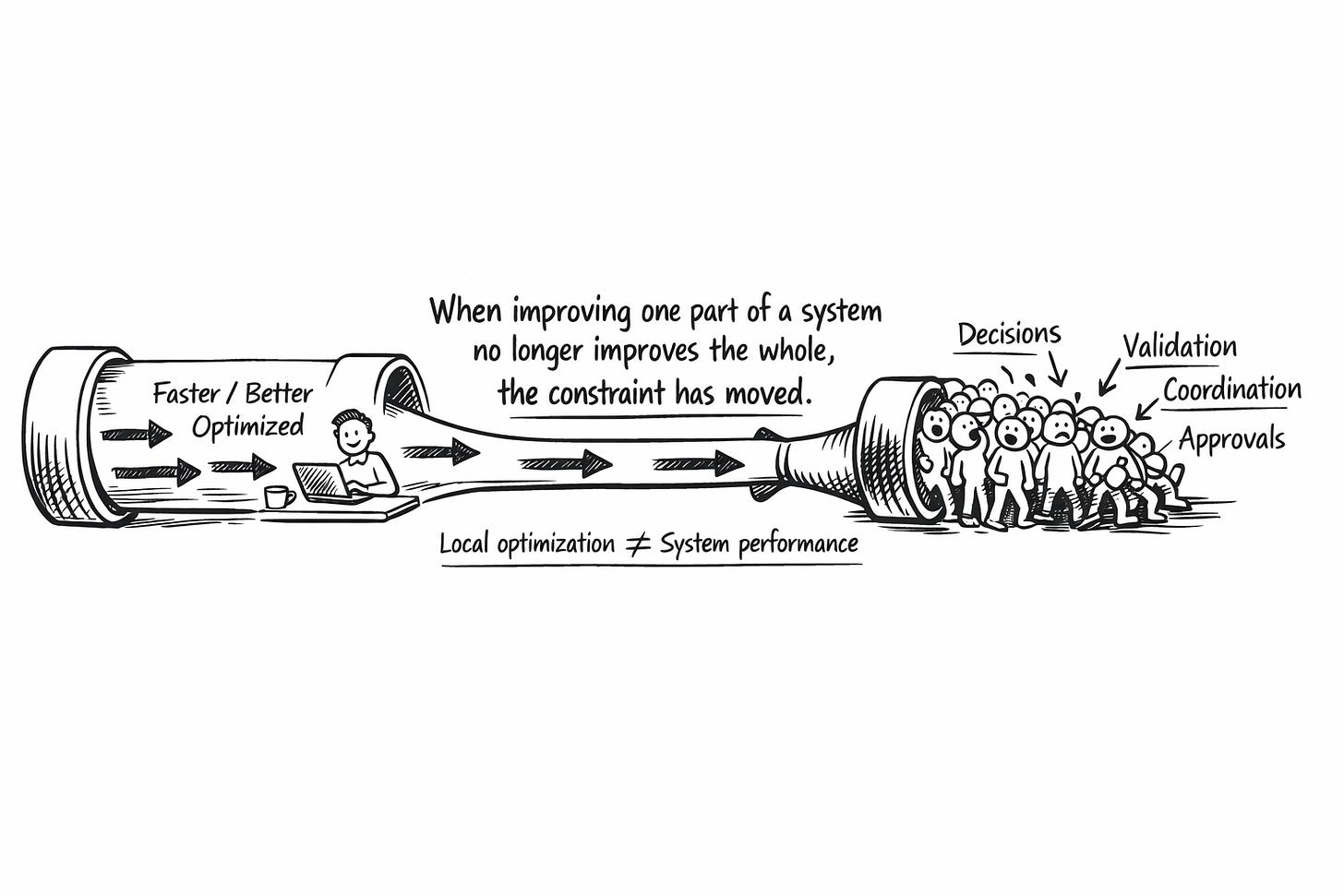

When improving one part of a system no longer improves the whole, it usually means the constraint has moved elsewhere.

The real bottleneck: coordination and decisions

Modern engineering is not a pipeline—it is a network.

Value is not created in isolation by writing code but through a continuous flow of decisions across multiple domains: product defining priorities, design shaping user experience, security enforcing constraints, compliance defining boundaries, and business stakeholders driving commercial direction. Every meaningful change in the system crosses these boundaries.

And at each boundary, coordination is required.

Research into organizational dynamics has consistently shown that as companies scale, collaboration overhead increases non-linearly. Studies on “collaborative overload” highlight how a small percentage of individuals, often in central roles like engineering leads and senior developers, become bottlenecks because they sit at the intersection of too many dependencies. At the same time, research on attention residue demonstrates that frequent context switching significantly degrades cognitive performance, reducing the quality of decision-making and increasing error rates of those individuals.

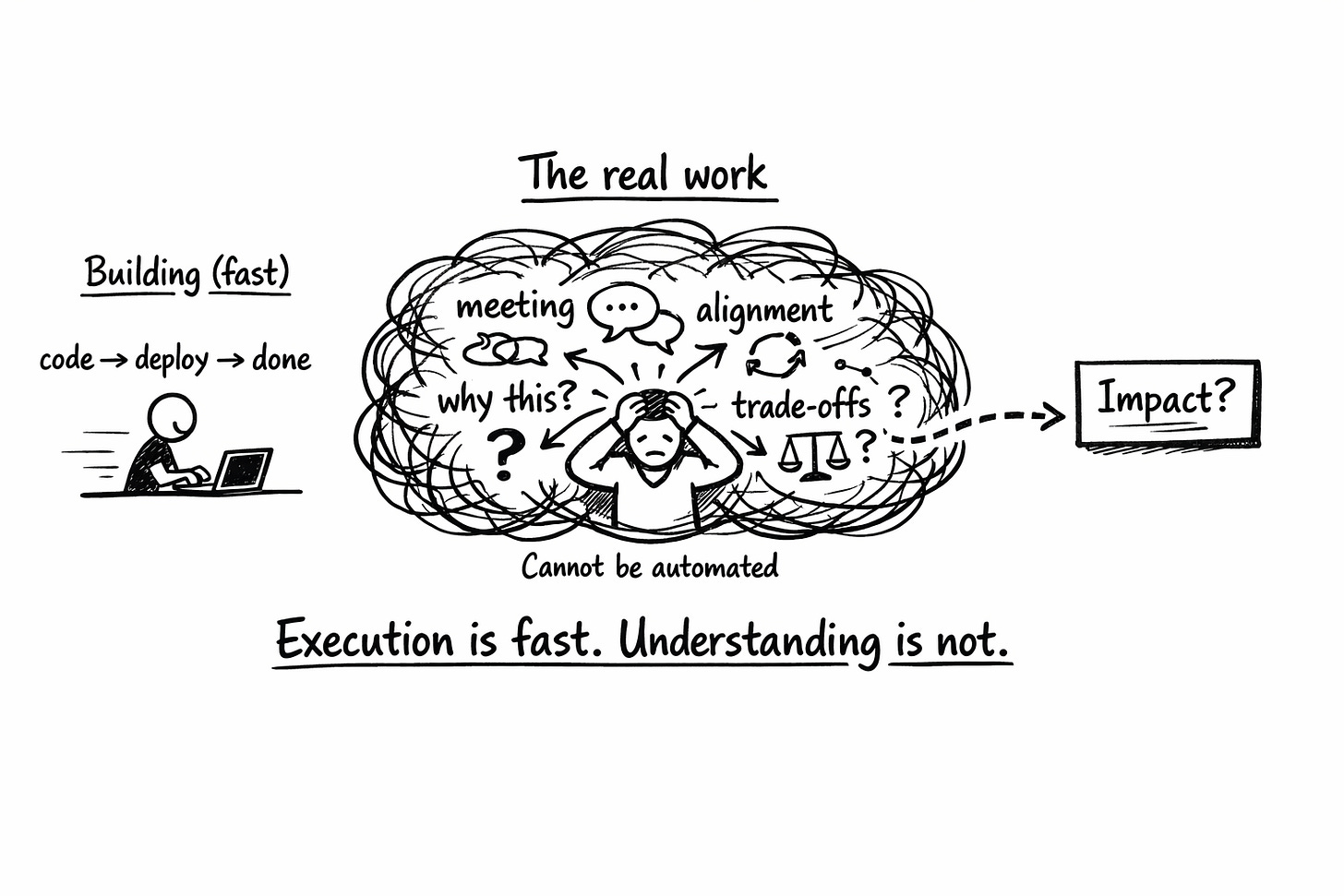

In practice, this means that a growing portion of engineering time is not spent building but navigating communication: meetings, alignment discussions, clarifications, and trade-offs.

This is where the real work happens.

Deciding what to build.

Aligning on why it matters.

Understanding how it fits into an evolving system.

And validating it affects the correct metrics in the expected way.

These activities are inherently slower, more ambiguous, and harder to optimize than execution. And unlike code generation, they cannot be easily automated.

This has always been and will continue to be a bottleneck, as it’s a system absorption problem and not a procedural optimization.

AI didn’t remove complexity—it accelerated exposure to it

AI has not simplified this system. It has intensified it. By dramatically reducing the cost of execution.

AI increases the rate at which teams can generate output. More ideas are explored, more features are started, and more changes are introduced into the system. On the surface, this looks like progress. But each of those outputs carries a hidden cost: it requires decisions, alignment, and validation.

And those layers have not accelerated because:

Customer feedback still takes time.

Understanding real impact still takes time.

Aligning multiple stakeholders still takes time.

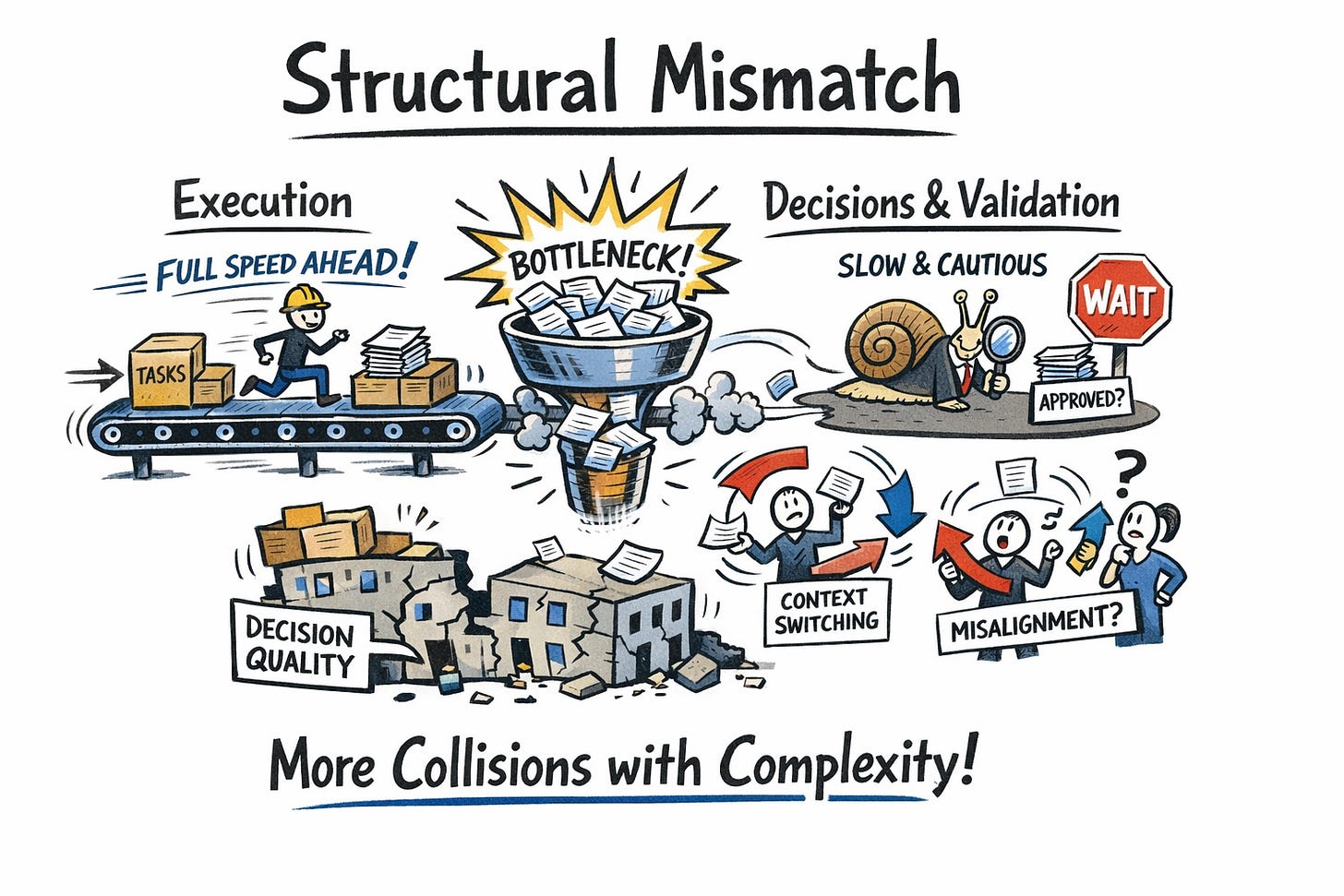

So what emerges is a structural mismatch:

Execution operates at one speed.

Decision-making and validation operate at another.

The result is predictable.

Teams hit the coordination bottleneck more frequently. More work enters the system than the organization can properly process. Context switching increases. Alignment becomes fragmented. And decision quality begins to degrade under pressure.

We did not remove complexity. We increased the rate at which we collide with it.

Conclusion: The constraint is now cognitive, not technical

For years, we thought the limiting factor in engineering was only technical.

How fast we could build.

How safely we could deploy.

How efficiently we could execute.

Today, that has been shown not to be the truth. The constraint is something less visible but far more fundamental: our ability to make good decisions, align across boundaries, and validate before scaling.

And most organizations are not designed for this.

They are still optimized for a world where execution was expensive and slow. A world where the primary challenge was building the thing.

That is no longer the challenge.

Now, the challenge is knowing whether the thing we are building should exist at all—and proving it before we scale it.